Tencent has developed a '3D world generator' for game developers and embodied intelligence

Producing 3D content has long been a job with a high barrier to entry.

To create a game level, a 3D modeler has to build the scene step by step, place objects, and tune the lighting. To create a training environment for embodied intelligence, someone has to build a digital twin of the real-world environment. Both tasks used to be done by hand by professionals, which was time-consuming and laborious.

From 'Sculpting Objects' to 'Creating the World'

The previous generation of 3D generative models mostly focused on "single objects": give one a sentence and it generates a chair, a car, or a tree. But a complete, explorable 3D scene? That was out of reach.

The core upgrade of HY-World2.0 is to expand the capability from "generating objects" to "generating worlds". It can generate not only individual 3D models but also an entire 3D space, with people, objects, and scenery together.

Specifically, it supports three input modes: text, images, and video. Enter a text description such as 'a sunny city street', and it generates an entire explorable 3D scene, not just a tree or a street lamp.

The generated assets are also interactive and editable. You can keep adjusting the result, adding things and changing the layout, rather than being stuck with a static, "fixed" file.

How does it work technically?

Let's talk about a few core upgrades:

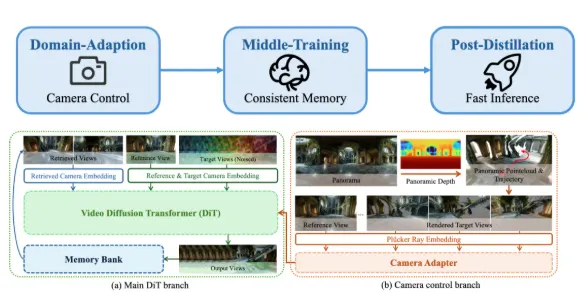

1. HY Pano-2.0: 360-degree panoramic mapping

It uses an end-to-end implicit learning scheme to complete 360-degree panoramic mapping without camera parameters. In plain language: you don't need to supply professional shooting parameters, because it works out the spatial structure from the input content on its own. This design lowers the barrier to use and lets ordinary users generate high-quality panoramic 3D content.

2. Spatial Agent: Intelligent Planning of Roaming Trajectories

Tencent's self-developed spatial agent technology combines a vision-language model (VLM) with a navigation mesh (navmesh) representation. Simply put, it does not just generate static scenes; it also understands the spatial structure of the scene and plans reasonable roaming paths. The generated 3D scene is not merely a static asset sitting there: it has "roads" along which a character can walk.
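To make the navmesh idea concrete, here is a minimal sketch of path planning over walkable regions. It is not Tencent's implementation: the region names, coordinates, and the choice of A* search are all assumptions made for illustration. Each walkable polygon is reduced to its centroid, and edges connect polygons that share a border.

```python
import heapq
import math

# Hypothetical navmesh: polygon name -> centroid (x, y) in meters.
centroids = {
    "street_a": (0.0, 0.0),
    "street_b": (4.0, 0.0),
    "plaza": (4.0, 3.0),
    "alley": (0.0, 5.0),
    "cafe_door": (8.0, 3.0),
}
# Hypothetical adjacency: polygons that share an edge are walkable neighbors.
neighbors = {
    "street_a": ["street_b", "alley"],
    "street_b": ["street_a", "plaza"],
    "plaza": ["street_b", "alley", "cafe_door"],
    "alley": ["street_a", "plaza"],
    "cafe_door": ["plaza"],
}


def distance(a, b):
    """Straight-line distance between two polygon centroids."""
    (ax, ay), (bx, by) = centroids[a], centroids[b]
    return math.hypot(ax - bx, ay - by)


def plan_path(start, goal):
    """A* search over centroids; returns (path, length) or (None, inf)."""
    # Heap entries: (estimated total cost, cost so far, node, path taken).
    frontier = [(distance(start, goal), 0.0, start, [start])]
    best_cost = {start: 0.0}
    while frontier:
        _, cost, node, path = heapq.heappop(frontier)
        if node == goal:
            return path, cost
        if cost > best_cost.get(node, math.inf):
            continue  # stale entry; a cheaper route to this node was found
        for nxt in neighbors[node]:
            new_cost = cost + distance(node, nxt)
            if new_cost < best_cost.get(nxt, math.inf):
                best_cost[nxt] = new_cost
                estimate = new_cost + distance(nxt, goal)
                heapq.heappush(frontier, (estimate, new_cost, nxt, path + [nxt]))
    return None, math.inf


path, length = plan_path("street_a", "cafe_door")
print(path, length)
# ['street_a', 'street_b', 'plaza', 'cafe_door'] 11.0
```

In a real engine the navmesh would come from the scene geometry and the path would be smoothed across polygon edges. The point here is only that a navmesh turns "where can a character walk" into a graph search problem.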

3. WorldStereo: Cross-Region Consistency

When you add a newly generated area to an existing scene, it keeps the new area geometrically and visually consistent with the original. There are no awkward issues such as 'the new room and the corridor not matching in size' or 'inconsistent lighting direction'.

4. WorldMirror 2.0: Realistic Scene Reproduction

It can also reproduce real scenes, predicting dense point clouds and camera parameters in a single pass to build high-precision digital twins. This ability is very valuable for embodied intelligence training: you can train robots in a digital twin environment and then validate them in a real environment after training.
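Point clouds and camera parameters are linked by a simple piece of geometry: given both, every 3D point can be projected back into the image it came from. The sketch below uses the standard pinhole camera model to show that relationship. The focal lengths, principal point, and camera position are assumed values chosen for illustration, not output from WorldMirror 2.0, and the camera axes are assumed to be aligned with the world axes to keep the example short.

```python
# Hypothetical camera parameters (assumed values, not model output).
FX, FY = 500.0, 500.0               # focal lengths in pixels
CX, CY = 320.0, 240.0               # principal point in pixels
CAMERA_POSITION = (0.0, 0.0, -2.0)  # camera center in world coordinates


def project(point):
    """Project a world-space point to pixel coordinates (pinhole model).

    Returns None when the point lies behind the camera.
    """
    # Move the point into the camera frame (translation only, no rotation).
    x = point[0] - CAMERA_POSITION[0]
    y = point[1] - CAMERA_POSITION[1]
    z = point[2] - CAMERA_POSITION[2]
    if z <= 0:
        return None
    # Perspective divide, then shift by the principal point.
    return (FX * x / z + CX, FY * y / z + CY)


# A tiny hypothetical "point cloud": two visible points, one behind the camera.
points = [(0.0, 0.0, 0.0), (1.0, 0.5, 2.0), (0.0, 0.0, -3.0)]
pixels = [project(p) for p in points]
print(pixels)
# [(320.0, 240.0), (445.0, 302.5), None]
```

Comparing such reprojected pixels with the original images is a common way to check how well a reconstructed point cloud and its estimated cameras agree.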

Where is it stronger than its competitors?

Tencent specifically noted that, compared with mainstream models such as Google's Genie3, the key breakthrough of HY-World2.0 is that the generated assets have real physical collision properties and support free exploration in character mode.

This matters. Models like Genie3 generate 3D content that leans toward "visual display", more like a "model in a shop window": the physical properties are not realistic enough, and the output cannot be used directly to build game levels. The assets generated by HY-World2.0 are "directly usable": they work inside a game engine, with collisions, paths, and explorability.

In addition, it supports export in multiple formats, including mesh, 3DGS (3D Gaussian splatting), and point cloud, so the output can be integrated directly with mainstream game engines such as Unity and Unreal Engine (UE). For game development teams, this means the first step of 3D content creation can be done with AI rather than by modeling manually from scratch.
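As a rough idea of what a point cloud export looks like on disk, here is a minimal sketch that serializes colored points as ASCII PLY, a widely used point-cloud container. The article does not say which file formats HY-World2.0 actually writes, so the choice of PLY, the function name, and the sample points are all assumptions.

```python
def point_cloud_to_ply(points):
    """Serialize (x, y, z, r, g, b) tuples as an ASCII PLY document."""
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(points)}",
        "property float x",
        "property float y",
        "property float z",
        "property uchar red",
        "property uchar green",
        "property uchar blue",
        "end_header",
    ]
    # One line per point: position followed by 8-bit RGB color.
    body = [f"{x} {y} {z} {r} {g} {b}" for (x, y, z, r, g, b) in points]
    return "\n".join(header + body) + "\n"


# Two hypothetical points: one red, one green.
points = [
    (0.0, 0.0, 0.0, 255, 0, 0),
    (1.0, 0.5, 2.0, 0, 255, 0),
]
ply_text = point_cloud_to_ply(points)
print(ply_text)
```

Saving `ply_text` to a `.ply` file gives something that common 3D viewers can open. Game engines generally need an importer plugin or a conversion to mesh before a point cloud becomes usable geometry.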

What does it mean?

Tencent's move with Hunyuan takes a very practical direction: pushing 3D content creation toward real-world use.

The ability to 'create worlds' is a real need in game level prototyping and in building simulation environments for embodied intelligence. With the barrier lowered, more teams can use this ability: it is no longer only large companies building AAA game levels, since small and medium-sized teams can also use AI to set up environments quickly.

This is probably the core goal of Tencent open-sourcing Hunyuan: to open up its capabilities so that the entire industry ecosystem can use them.