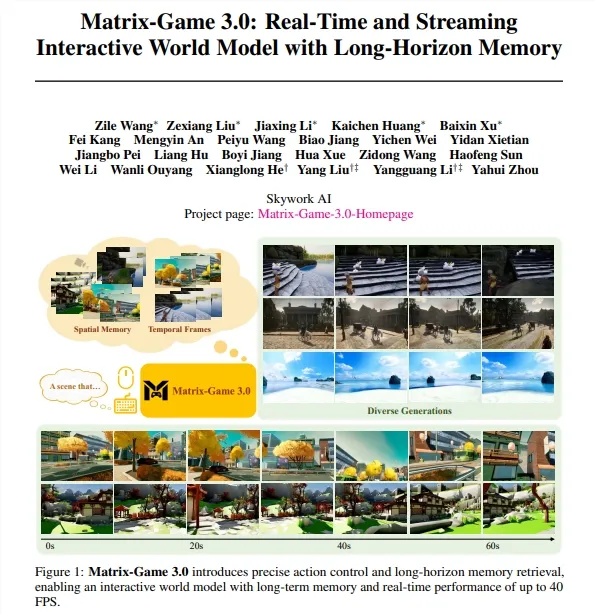

Real-time 720p generation at 40 FPS: AI can finally 'build worlds' instead of just 'generating fragments'

This breakthrough is quite interesting. The Skywork AI team has released Matrix Gate 3.0, which, for the first time, achieves real-time video generation at 40 frames per second in 720p high definition. More importantly, it solves the long-standing problem of "amnesia" in AI video generation.

This is not just about 'generating faster'; it is about evolving from 'generating fragments' to 'building worlds'.

Why do AI videos suffer from 'amnesia'?

Let's start with the technical problem. For a long time, AI video generation models have lacked an effective memory, so during long interactive sequences they often produce inconsistent spatial structure or drift in style.

What does that mean? Suppose you ask an AI to generate a virtual world that you can walk around and explore. When you wander off and then return to the starting point, the AI may have "forgotten" what that spot originally looked like: scene details have changed and the style is no longer consistent. This is 'amnesia'.

This problem is critical. If you are building an interactive virtual world and users revisit a familiar place only to find the scene completely changed, the experience falls apart.

Matrix Gate 3.0 breaks through this bottleneck by introducing a camera-aware memory retrieval mechanism. The system accurately retrieves relevant historical frames based on the current camera pose, and it adopts a unified self-attention architecture that jointly models long-term memory, recent history, and the frame currently being predicted in the same space.
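The article does not describe how the retrieval works internally. As a minimal sketch, assume each generated frame is stored together with its camera pose, and that "closeness" is scored as position distance plus a weighted heading difference. `CameraPose`, `MemoryEntry`, `pose_distance`, and `retrieve_memory` are hypothetical names, and the real system may use a different criterion (for example, field-of-view overlap).

```python
import math
from dataclasses import dataclass


@dataclass
class CameraPose:
    x: float
    y: float
    z: float
    yaw: float  # heading in radians


@dataclass
class MemoryEntry:
    frame_id: int
    pose: CameraPose  # camera pose at the moment this frame was generated


def pose_distance(a: CameraPose, b: CameraPose, angle_weight: float = 2.0) -> float:
    """Hypothetical score: Euclidean position gap plus a weighted heading gap."""
    pos_gap = math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))
    # Wrap the yaw difference into [0, pi] so headings on either side of 0 compare correctly.
    yaw_gap = abs((a.yaw - b.yaw + math.pi) % (2 * math.pi) - math.pi)
    return pos_gap + angle_weight * yaw_gap


def retrieve_memory(bank: list[MemoryEntry], current: CameraPose, k: int = 2) -> list[int]:
    """Return the ids of the k stored frames whose camera pose is closest to the current pose."""
    ranked = sorted(bank, key=lambda m: pose_distance(m.pose, current))
    return [m.frame_id for m in ranked[:k]]


bank = [
    MemoryEntry(0, CameraPose(0.0, 0.0, 0.0, 0.0)),           # starting point
    MemoryEntry(1, CameraPose(10.0, 0.0, 0.0, 0.0)),
    MemoryEntry(2, CameraPose(10.0, 10.0, 0.0, math.pi / 2)),
    MemoryEntry(3, CameraPose(0.5, 0.0, 0.0, 0.1)),           # close to the start
]
# Walking back to the start should recall the frames generated there.
print(retrieve_memory(bank, CameraPose(0.0, 0.0, 0.0, 0.05)))  # → [0, 3]
```

Keying memory on camera pose rather than on time is what lets a revisited location recall frames generated long ago, even if many other frames were produced in between.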

Simply put, the AI gains a 'long-term memory': it can remember previously generated content and keep the scene consistent across space and time.
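To illustrate what "jointly modeling in the same space" can mean, here is a toy sketch. It assumes memory, recent-history, and current-frame features are token vectors concatenated into one sequence and passed through a single self-attention layer. The identity query/key/value projections and the function names (`self_attention`, `unified_step`) are simplifications for illustration, not the model's actual design.

```python
import math

Vector = list[float]


def softmax(scores: list[float]) -> list[float]:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens: list[Vector]) -> tuple[list[Vector], list[list[float]]]:
    """Single-head scaled dot-product self-attention with identity Q/K/V projections."""
    d = len(tokens[0])
    outputs, weights = [], []
    for q in tokens:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in tokens]
        w = softmax(scores)
        # Each output is the attention-weighted average of every token in the sequence.
        outputs.append([sum(wj * v[i] for wj, v in zip(w, tokens)) for i in range(d)])
        weights.append(w)
    return outputs, weights


def unified_step(memory: list[Vector], recent: list[Vector], current: list[Vector]):
    """Place memory, recent-history and current-frame tokens in ONE sequence and attend jointly."""
    sequence = memory + recent + current
    outputs, weights = self_attention(sequence)
    start = len(memory) + len(recent)
    # Only the current-frame positions are predictions; their attention rows span all three groups.
    return outputs[start:], weights[start:]


memory = [[1.0, 0.0]]   # a retrieved long-term memory token
recent = [[0.0, 1.0]]   # a recent-history token
current = [[1.0, 0.0]]  # current-frame token that resembles the remembered view
out, w = unified_step(memory, recent, current)
# The memory token receives as much weight as the current token itself.
print([round(x, 3) for x in w[0]])
```

Because all three groups share one attention operation, the current frame can draw directly on a remembered view without a separate memory module.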

Training AI to Understand the Physical World with AAA Game Data

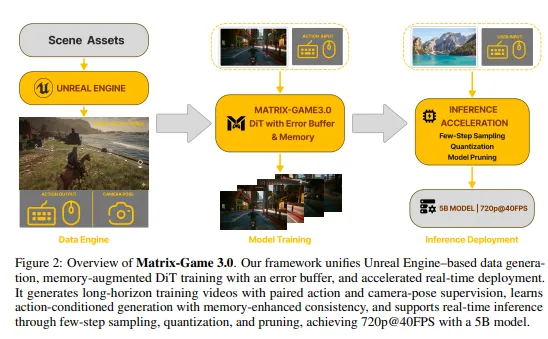

To help the AI genuinely understand the physical logic of the real world, the R&D team built a large-scale "data factory".

This data factory draws on three sources:

First, synchronized generation in virtual worlds. The team developed the Unreal Gen platform on Unreal Engine 5 (UE5), which automatically generates cinematic-quality interactive videos covering more than 100 million character combinations. This is self-produced data.

Second, large-scale automated collection from AAA games. The system can automatically record high-quality interactive data at scale from top titles such as Grand Theft Auto V and Cyberpunk 2077. This is learning from the best games.

Third, supplementary real-world footage from many kinds of scenes. The team integrated more than 10,000 real-world 4K sequences covering diverse settings such as indoor scenes, cities, and aerial footage. This is learning from the real world.

Combining these three types of data lets the AI learn both how virtual worlds are constructed and the physical laws of the real world. It is a clever training strategy.
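The article does not give the mixing ratios between the three sources. A minimal sketch of how such a mixture might be sampled during training, with purely hypothetical weights and source names:

```python
import random
from collections import Counter

# Hypothetical mixing weights: the real ratios are not disclosed in the article.
SOURCES = {
    "unreal_gen_synthetic": 0.4,  # clips rendered with the UE5-based Unreal Gen platform
    "aaa_game_recordings": 0.4,   # automated recordings from AAA games
    "real_world_4k": 0.2,         # real-world 4K sequences
}


def sample_sources(n: int, weights: dict[str, float], seed: int = 0) -> list[str]:
    """Pick the source of each of n training clips according to fixed mixing weights."""
    rng = random.Random(seed)  # seeded so the mix is reproducible
    names = list(weights)
    return rng.choices(names, weights=[weights[s] for s in names], k=n)


batch = sample_sources(10_000, SOURCES)
print(Counter(batch))  # roughly 4000 / 4000 / 2000
```

Weighted sampling like this is one simple way to balance sources of very different sizes so that the largest one does not dominate training.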

How is the speed achieved? Pruning + quantization

To meet the ultra-low-latency requirements of real-time interaction, Matrix Gate 3.0 heavily optimizes its inference architecture.

The team adopted a multi-stage autoregressive distillation strategy and combined it with VAE decoder pruning (at pruning rates of up to 75%), which speeds up decoding by more than 5x.

What is pruning? It means removing the less important parameters from a model. A 75% pruning rate means only 25% of the decoder's parameters are kept, yet output quality stays essentially unchanged. This takes precise technique, because removing an important parameter by mistake degrades the result.
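The article does not say which pruning criterion the team used. As a hedged illustration, here is structured magnitude pruning, one common approach: the output channels (rows) of a layer with the smallest L2 norm are dropped at a 75% pruning rate. `prune_channels` and the toy weights are hypothetical.

```python
import math

Matrix = list[list[float]]  # rows = output channels of one layer


def prune_channels(weights: Matrix, prune_rate: float) -> tuple[Matrix, list[int]]:
    """Structured magnitude pruning: drop the output channels (rows) with the smallest L2 norm."""
    n_keep = max(1, round(len(weights) * (1 - prune_rate)))
    norms = [math.sqrt(sum(w * w for w in row)) for row in weights]
    # Take the strongest channels, then restore their original order to keep the layer layout.
    keep = sorted(sorted(range(len(weights)), key=lambda i: norms[i], reverse=True)[:n_keep])
    return [weights[i] for i in keep], keep


layer = [
    [0.9, -0.8], [0.01, 0.02], [0.05, -0.03], [0.7, 0.6],
    [0.02, 0.0], [-0.04, 0.01], [0.03, 0.03], [0.0, -0.05],
]
pruned, kept = prune_channels(layer, prune_rate=0.75)
print(kept)  # → [0, 3]: 2 of 8 channels (25%) survive
```

Structured pruning is used here because dropping whole channels shrinks the dense matrix multiplications, which is what turns into real speedups. In an actual decoder, removing output channels also means removing the matching input channels of the next layer, and the pruned model is usually fine-tuned or distilled to recover quality.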

In addition, methods such as INT8 quantization further reduce computational overhead. Quantization converts floating-point numbers into low-precision integers (8-bit integers in the case of INT8), which makes computation faster and uses less memory.
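A minimal sketch of symmetric per-tensor INT8 quantization, one standard scheme (the article does not specify which INT8 scheme Matrix Gate 3.0 uses): each float is divided by a scale and rounded into the range [-127, 127], and multiplying back by the scale recovers an approximation.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor INT8 quantization: map [-max|x|, max|x|] onto [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the signed 8-bit range.
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Map the integers back to approximate floating-point values."""
    return [x * scale for x in q]


weights = [0.5, -1.27, 0.003, 1.0]
q, scale = quantize_int8(weights)
print(q)  # → [50, -127, 0, 100]
print(dequantize(q, scale))  # close to the originals; 0.003 is rounded away to 0.0
```

Each value now needs one byte instead of two or four, and the rounding error per value is at most half a scale step, which is the precision traded for speed and memory.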

The end result: a model with 5B parameters still runs smoothly at 40 FPS at 720p resolution.
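A back-of-the-envelope check of what these numbers imply, assuming decimal gigabytes and counting model weights only (activations and caches are excluded, and the article gives no memory figures):

```python
def frame_budget_ms(fps: float) -> float:
    """Time available to generate and decode one frame if output must keep up in real time."""
    return 1000.0 / fps


def weight_memory_gb(n_params: float, bytes_per_param: int) -> float:
    """Memory for the weights alone, in decimal GB."""
    return n_params * bytes_per_param / 1e9


print(frame_budget_ms(40))       # → 25.0 ms per frame
print(weight_memory_gb(5e9, 2))  # 16-bit weights → 10.0 GB
print(weight_memory_gb(5e9, 1))  # INT8 weights → 5.0 GB
```

At 40 FPS every frame, including VAE decoding, has to fit in about 25 ms, which is why a 5x faster decoder and halving the weight footprint both matter.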

A 28B MoE model was also demonstrated

In addition to the 5B version, the team also showcased an MoE model with up to 28B parameters.

MoE stands for Mixture of Experts. The defining trait of this architecture is that the model is large overall, but only a subset of its parameters (a few selected "experts") is activated for each input token, so inference stays fast relative to the total model size.
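A toy sketch of top-k routing, the common MoE mechanism, assuming 4 experts with 2 active per token. The article does not disclose the expert count or routing details of the 28B model, and the experts here are stand-in scaling functions rather than real feed-forward blocks; `top_k_moe` is a hypothetical name.

```python
import math
from typing import Callable

Vector = list[float]
Expert = Callable[[Vector], Vector]


def top_k_moe(token: Vector, gate: list[Vector], experts: list[Expert], k: int = 2):
    """Route one token to its top-k experts and mix their outputs with renormalized gate weights."""
    # Router logits: one score per expert (a dot product with that expert's gate vector).
    logits = [sum(t * g for t, g in zip(token, row)) for row in gate]
    chosen = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Softmax over the selected experts only.
    m = max(logits[i] for i in chosen)
    exps = {i: math.exp(logits[i] - m) for i in chosen}
    total = sum(exps.values())
    out = [0.0] * len(token)
    for i in chosen:  # only the chosen experts run; the others stay idle for this token
        y = experts[i](token)
        out = [o + exps[i] / total * yi for o, yi in zip(out, y)]
    return out, chosen


# Four toy experts that just scale their input.
experts: list[Expert] = [lambda x, s=s: [s * v for v in x] for s in (1.0, 2.0, 3.0, 4.0)]
gate = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.5, 0.5]]
out, chosen = top_k_moe([2.0, 1.0], gate, experts, k=2)
print(chosen)  # → [0, 3]: only 2 of the 4 experts were evaluated
```

Total parameters grow with the number of experts, but per-token compute grows only with k, which is why a large MoE model can still run relatively fast.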

As the model scales up, it shows stronger capabilities in dynamic simulation, scene transitions, and overall generalization. This suggests that scale still matters, but realizing its benefits requires an efficient architecture such as MoE.

What is this technology good for?

Industry experts point out that Matrix Gate 3.0 provides a key technological foundation for robot training, XR (extended reality), and next-generation immersive entertainment.

Imagine:

Robot training: training robots in AI-generated virtual worlds costs less and carries less risk than training them in the real world.

XR (extended reality): real-time generation of interactive virtual environments makes VR/AR experiences more immersive.

Immersive entertainment: games, virtual social spaces, and the metaverse all require real-time generation of interactive worlds.

This marks a new stage in the evolution of AI from simple "fragment generation" to "real-time construction of interactive worlds".

To sum up, Matrix Gate 3.0 generates 720p video in real time at 40 FPS and solves the "amnesia" problem in AI video. It is trained on AAA game data and real-world footage, and it uses pruning and quantization to speed up inference. The 5B-parameter model runs smoothly, and a 28B MoE version has also been shown. Together, these provide a technological foundation for robot training, XR, and immersive entertainment.