As artificial intelligence advances rapidly, the range of applications for large models keeps expanding. Deploying these models efficiently on end devices, slimming them down without weakening them ("losing fat while gaining muscle"), remains a major challenge for the industry.

On February 10, 2026, Tencent's Hunyuan team announced HY-1.8B-2Bit, an ultra-compact model for consumer-grade hardware. Using what the team describes as the first industrial-grade 2-bit quantization scheme, the model is compressed to an equivalent parameter count of 0.3B and occupies only about 600 MB of memory, which is smaller than some commonly used mobile apps. The team presents this as a significant step toward running large models on-device.
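To put these size figures in context, the following back-of-the-envelope sketch estimates the storage needed for weights alone at different bit widths. The 1.8B parameter count is inferred from the model name, and reading "equivalent parameters" as the footprint re-expressed in 16-bit parameters is an assumption; the announcement does not spell out how either number was derived.

```python
def weight_bytes(num_params: float, bits_per_weight: float) -> float:
    """Bytes needed for the weights alone (no scales, metadata, or runtime buffers)."""
    return num_params * bits_per_weight / 8


PARAMS = 1.8e9  # assumption: parameter count taken from the model name

for bits in (16, 4, 2):
    megabytes = weight_bytes(PARAMS, bits) / 1e6
    print(f"{bits:>2}-bit weights: {megabytes:,.0f} MB")

# Assumption: "equivalent parameter count" means the reported ~600 MB footprint
# expressed as 16-bit (2-byte) parameters.
REPORTED_FOOTPRINT_BYTES = 600e6
equivalent_16bit_params = REPORTED_FOOTPRINT_BYTES / 2
print(f"~600 MB corresponds to {equivalent_16bit_params / 1e9:.1f}B parameters at 16 bits")
```

Under these assumptions the raw 2-bit weights come to less than the reported footprint. The difference could plausibly come from quantization scales or tensors kept at higher precision, but the announcement does not break this down.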

Technical breakthrough: 2-bit quantization tackles the dual challenge of accuracy and size

Quantization is a key technique for reducing model size and improving inference efficiency at deployment time. However, the fewer bits used, the greater the model's accuracy loss. Achieving extreme compression while preserving performance has long been regarded as an "impossible task" in the industry.
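The trade-off between bit width and accuracy can be illustrated with a minimal sketch. It applies plain symmetric uniform quantization to random Gaussian "weights" and measures the reconstruction error at 8, 4, and 2 bits. This is a generic textbook scheme chosen for illustration, not the scheme used in HY-1.8B-2Bit, and the helper names are hypothetical.

```python
import random


def quantize_dequantize(values, bits):
    """Symmetric uniform quantization to `bits` bits, then back to floats."""
    levels = 2 ** (bits - 1) - 1            # 127 for 8-bit, 7 for 4-bit, 1 for 2-bit
    max_abs = max(abs(v) for v in values) or 1.0
    scale = max_abs / levels                # one shared scale for the whole tensor
    return [round(v / scale) * scale for v in values]


def mean_squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


random.seed(0)
weights = [random.gauss(0.0, 1.0) for _ in range(10_000)]  # stand-in for real weights

errors = {
    bits: mean_squared_error(weights, quantize_dequantize(weights, bits))
    for bits in (8, 4, 2)
}
for bits, err in errors.items():
    print(f"{bits}-bit reconstruction MSE: {err:.6f}")
```

With a single scale set by the largest weight, the 2-bit grid in this sketch has only three usable values, so most weights collapse to zero. That is why naive rounding at 2 bits tends to destroy accuracy and why more careful schemes are needed.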

The Tencent Hunyuan team moved away from the conventional post-training quantization (PTQ) strategy and adopted quantization-aware training (QAT) instead. Combined with data optimization, stretched elastic quantization, and training-strategy innovations, this allowed the model to retain high accuracy under 2-bit quantization.
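The core idea of QAT can be sketched on a toy problem: the forward pass uses quantized weights, while gradients update full-precision "latent" weights through a straight-through estimator. Everything below (the fixed scale of 0.5, the teacher weights, the learning rate, the plain identity gradient) is an illustrative assumption. It does not reproduce the Hunyuan team's training recipe or its stretched elastic quantization.

```python
def fake_quantize(w, scale=0.5, bits=2):
    """Round to the nearest of 2**bits integer levels, clamp, and map back to float."""
    q_min, q_max = -(2 ** (bits - 1)), 2 ** (bits - 1) - 1   # -2..1 for 2 bits
    q = max(q_min, min(q_max, round(w / scale)))
    return q * scale


# Toy data: orthogonal +/-1 inputs and a hypothetical full-precision teacher.
INPUTS = [[1, 1, 1, 1], [1, -1, 1, -1], [1, 1, -1, -1], [1, -1, -1, 1]]
TEACHER = [0.9, -0.6, 0.3, -1.0]
TARGETS = [sum(t * x for t, x in zip(TEACHER, row)) for row in INPUTS]


def loss_and_grad(latent):
    """Mean squared error of the quantized model and its gradient w.r.t. latent weights."""
    q = [fake_quantize(w) for w in latent]        # forward pass sees 2-bit weights only
    loss, grad = 0.0, [0.0] * len(latent)
    for row, y in zip(INPUTS, TARGETS):
        err = sum(qi * xi for qi, xi in zip(q, row)) - y
        loss += err ** 2 / len(INPUTS)
        for i, xi in enumerate(row):
            # Straight-through estimator: treat d(fake_quantize)/dw as 1.
            grad[i] += 2 * err * xi / len(INPUTS)
    return loss, grad


latent = [0.0] * 4                                # full-precision shadow weights
initial_loss, _ = loss_and_grad(latent)
for _ in range(200):
    _, grad = loss_and_grad(latent)
    latent = [w - 0.05 * g for w, g in zip(latent, grad)]

final_loss, _ = loss_and_grad(latent)
quantized = [fake_quantize(w) for w in latent]
print(f"loss before: {initial_loss:.3f}, after: {final_loss:.3f}")
print("deployed 2-bit weights:", quantized)
```

The contrast with PTQ is that the rounding step sits inside the training loop here, so the optimizer sees the quantization error and can compensate for it, rather than having rounding applied once after training has finished.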

Experimental data show that HY-1.8B-2Bit performs on par with the 4-bit PTQ version of the model on core benchmarks such as mathematics, code, and science. In other words, the model retains strong general capability despite its much smaller size.

Performance: Generation speed more than doubles across a range of on-device hardware

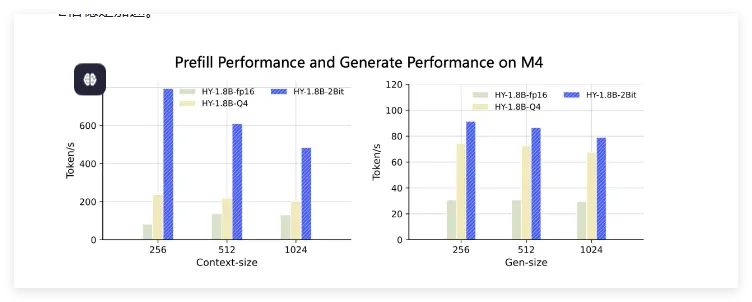

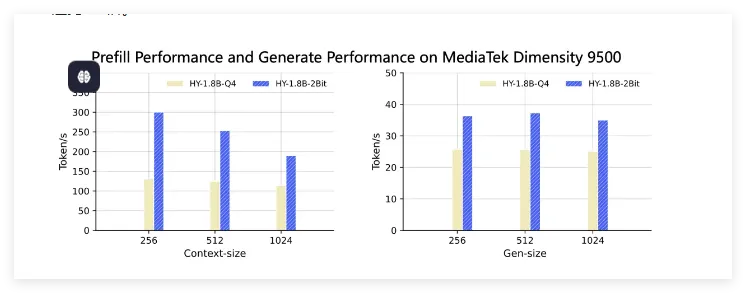

Thanks to this compression, HY-1.8B-2Bit performs well on real devices. Compared with the original-precision model, its generation speed is 2-3 times faster, as detailed below:

MacBook M4: For inputs of up to 1,024 tokens, time to first token is 3-8 times faster, and generation speed is consistently more than 2 times faster.

Dimensity 9500: Compared with the Q4 format, time to first token is 1.5-2 times faster, and generation speed is about 1.5 times faster.

In addition, HY-1.8B-2Bit inherits the long and short chain-of-thought capability of Hunyuan-1.8B-Instruct, letting users switch between the two according to task complexity, which makes the model more practical and flexible.

Reasoning ability: Flexible switching between long and short chains of thought to meet diverse needs

Beyond the gains in size and speed, HY-1.8B-2Bit retains strong reasoning ability. Drawing on the long and short chain-of-thought capability of Hunyuan-1.8B-Instruct, the model can adapt its reasoning mode to task complexity, handling both simple question answering and complex reasoning. This flexibility widens the range of on-device AI applications the model can serve.

Future plans: Reinforcement learning and model distillation to narrow the capability gap

HY-1.8B-2Bit is currently available as GGUF-int2 weights and has been adapted to the Arm SME2 platform. It suits scenarios that demand offline deployment and privacy, such as mobile phones, headphones, and smart-home devices. Tencent Hunyuan said it will use reinforcement learning and model distillation to further narrow the capability gap between low-bit and full-precision models and to advance on-device AI.

Conclusion: A new chapter for on-device AI

The release of Tencent Hunyuan's HY-1.8B-2Bit offers a new approach to running large models on-device and sets a reference point for the industry. As the technology matures and application scenarios expand, on-device AI has considerable room to grow.

Tencent Hunyuan's work adds momentum to this trend, and the next steps for on-device AI will be worth watching.