Leaderboard shake-up: a Chinese model tops the global video generation rankings for the first time

While the industry was still debating the rivalry between OpenAI Sora and Google Veo3, a dark horse from China quietly redrew the map of AI video generation.

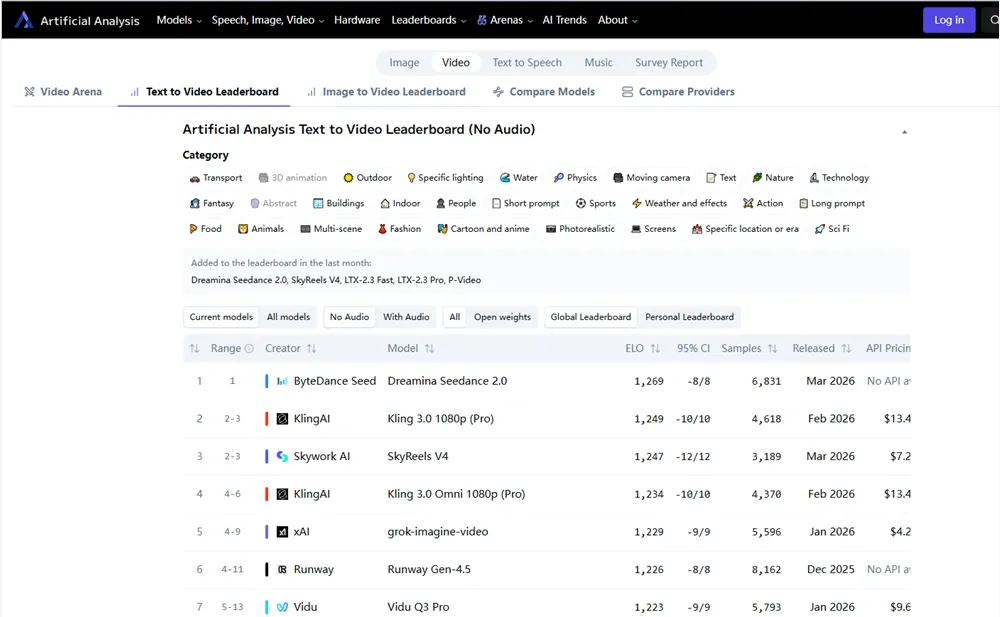

The latest independent blind-test data released by the evaluation organization Artificial Analysis shows that Seedance 2.0, the model launched by ByteDance, scored an Elo of 1269 in the Text to Video category, ranking first. It also holds a commanding lead in the Image to Video track, ahead of a number of top international competitors.

It is worth noting that this ranking is based entirely on large-scale blind voting by users, which removes the brand halo and directly reflects real human visual preferences and actual generation quality. Seedance 2.0 reaching the top marks a shift for Chinese AI companies from "catching up" to "leading" in multimodal generation technology.
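To make the Elo figure easier to interpret, here is a minimal sketch of how a rating gap translates into a head-to-head preference rate. It assumes the standard logistic Elo formula with a 400-point scale and a K-factor of 32; the article does not describe the exact rating method Artificial Analysis uses, and the rival rating of 1200 is a hypothetical value chosen only for illustration.

```python
def expected_score(rating_a: float, rating_b: float, scale: float = 400.0) -> float:
    """Probability that A is preferred over B under the standard Elo model.

    The 400-point scale is the conventional default, assumed here.
    """
    return 1.0 / (1.0 + 10.0 ** ((rating_b - rating_a) / scale))


def update(rating: float, expected: float, actual: float, k: float = 32.0) -> float:
    """Move a rating toward the result of one blind vote (1 = win, 0 = loss).

    The K-factor of 32 is an assumed value, not one taken from the article.
    """
    return rating + k * (actual - expected)


if __name__ == "__main__":
    seedance = 1269.0  # Text to Video score quoted in the article
    rival = 1200.0     # hypothetical competitor rating, for illustration only
    p = expected_score(seedance, rival)
    print(f"Expected preference rate vs. rival: {p:.3f}")
```

Under these assumptions, a 69-point gap corresponds to being preferred in roughly 60% of head-to-head blind votes, which is a clear but not absolute lead.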

Technical breakdown: not just 'moving', but 'physically real'

Seedance 2.0 won the demanding blind test not through an improvement in a single dimension, but through a thorough rebuild of its underlying architecture. Compared with the previous-generation model, it achieves three core breakthroughs:

Multimodal Unified Architecture: Unlike traditional staged generation, Seedance 2.0 accepts mixed input across four modalities: text, image, audio, and video. A user can upload an image and an audio clip, and the model directly generates a high-fidelity video with synchronized lip movements and a matching background, greatly lowering the barrier to creation.

Native AV Sync: This is the biggest highlight of the upgrade. Instead of creating the images first and dubbing them afterward, the model computes the audio waveform natively while generating the video pixels. This addresses the common problems of mismatched lip movements and unconvincing background sound in AI videos, producing an immersive, integrated audio-visual experience.

Director-level control and physical simulation: Seedance 2.0 introduces stronger physical simulation to address the biggest pain points of AI video, namely "hallucinations" and "physical collapse". In complex interactive scenes such as running characters, colliding objects, and fluid motion, the model maintains very high motion stability and logical consistency, and it also lets users finely control camera movement (push in, pull out, pan).

Ecosystem Rollout: Powering the Full Pipeline from Dreamina to CapCut

Technical wins ultimately have to serve real applications. ByteDance did not leave Seedance 2.0 on the shelf, but quickly integrated it into its large creator ecosystem:

International version of Dreamina (dreamina.capcut.com): Provides a web-based "one-click generation" experience, letting users generate 1080p high-definition videos from text prompts without any local computing power.

CapCut full-platform integration: The desktop and mobile versions have fully integrated the model and support free trials and paid subscriptions. This means hundreds of millions of short-video creators around the world can call top-tier AI capabilities directly inside their editing software and go "from concept to finished film" in seconds.

This closed-loop "model + tool" strategy makes Seedance 2.0 not just a technical demo but a productivity tool, directly lowering the cost and barrier to entry of advertising and marketing, film previsualization, and professional visual effects (VFX) production.

Industry Impact: An Efficiency Revolution Alongside Compliance Challenges

The global rollout of Seedance 2.0 is regarded in the industry as the "iPhone moment" of AI video generation.

For creators: It will trigger an efficiency revolution. Storyboard previews and special-effects shorts that previously took several days to produce can now be completed in minutes, so creators can focus on the creative idea itself rather than on technical implementation.

For the industry landscape: It strengthens the voice of Chinese companies in multimodal AI and pushes giants such as Google and OpenAI to iterate faster.

Potential challenges: As generated quality approaches reality, content compliance, copyright protection, and anti-abuse mechanisms become critical. ByteDance has said it will keep strengthening its safety guardrails to ensure the technology is used for good.

Seedance 2.0 targets the market's pain points with 1080p resolution, physical realism, and a low barrier to entry. For developers and creators, now is the window for hands-on testing through Dreamina or CapCut. In the future, as APIs open up, Seedance 2.0 may become infrastructure for enterprise-level video generation, ushering in a new era of AI-driven creativity.