Runway has officially announced the highly anticipated Image to Video feature for its Gen-4.5 model. This update is more than a routine model iteration. It targets the core requirements of film and television production: longer story generation, precise shot-control parameters, and highly coherent narratives with consistent characters across multi-shot sequences. The feature is now available to all paid-plan users, marking a further step for AI video generation tools toward professional production pipelines.

Runway promotes Gen-4.5 as the "best video model in the world", and its Image to Video feature focuses on four major pain points in current AI video creation:

Longer Stories

Moves beyond the limits of short clips, supporting longer and more logically complete video segments that lay the foundation for complex storytelling.

Precise Camera Control

Creators can precisely define camera movements such as push-in, pull-out, pan, and tracking, leaving behind "gacha"-style generation (where a usable result depends on luck) and achieving director-level visual control.

Coherent Narratives

Maintains logical coherence of scenes and actions along the timeline, reducing visual glitches and physically implausible motion.

Character Consistency

The centerpiece of this update, it tackles the persistent problem of characters' proportions and facial features drifting in AI-generated video, keeping the protagonist's identity consistent across shots.

Workflow and Experience

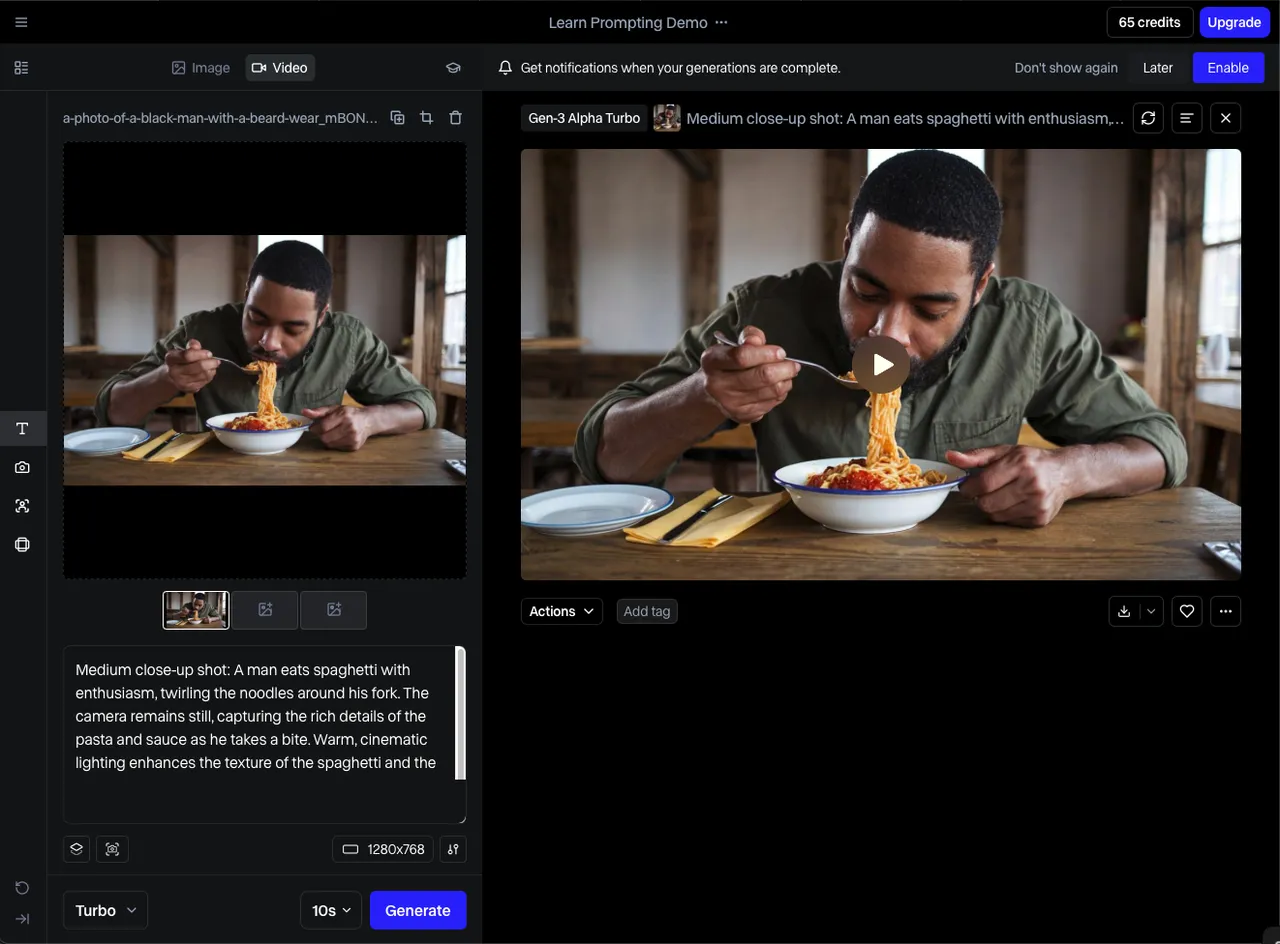

Opening the update directly to paying users suggests that Image to Video has reached production-grade stability. The typical workflow is already well established:

Material upload: Users upload reference images that serve as visual anchors for video generation.

Parameter settings: Fine-tune the camera motion type and motion intensity in the control panel to define the shot's cinematic language.

Generate and preview: Quickly generate preview clips to check whether the motion meets expectations.

Iterative refinement: Adjust the prompt or parameters based on the first results until the desired shot is produced.

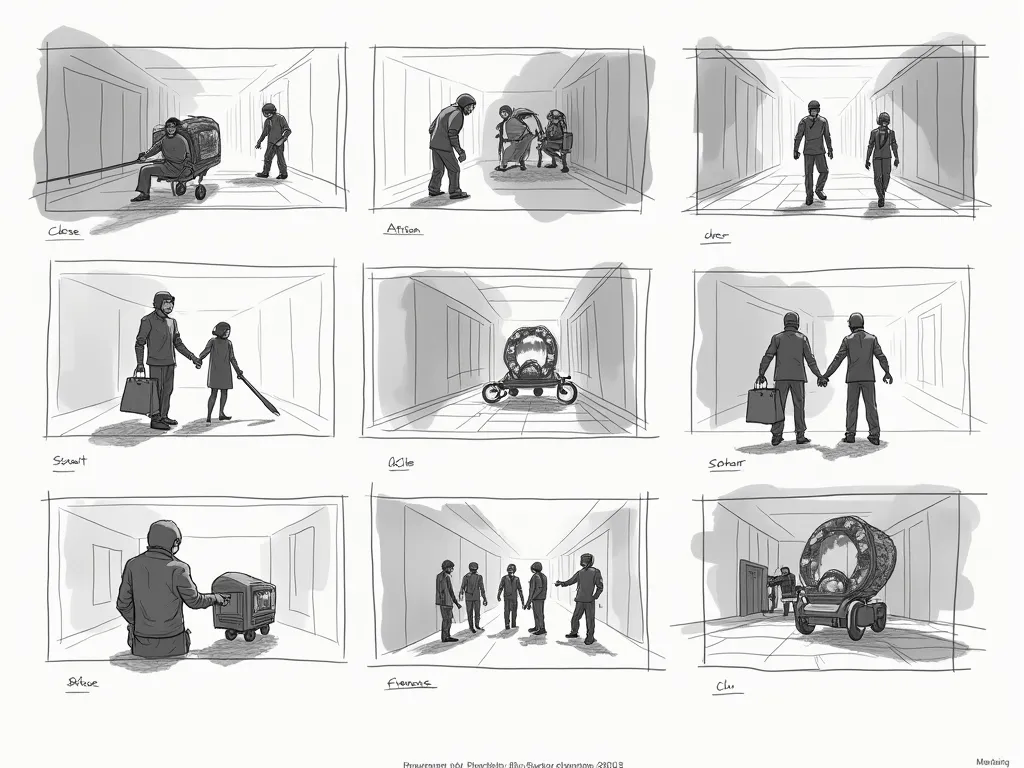

In Gen-4.5, camera control is not just about moving the viewpoint; it also regulates narrative rhythm. In the storyboard shown above, every shot size, from close-up to long shot, serves a narrative function.

With the precise control Image to Video offers, creators can explicitly request a "low-angle upward shot" to convey a character's imposing, oppressive presence, or a "slow push-in" to underscore building emotion. Combined with the model's character consistency, this fine-grained control over shot size, camera position, and motion parameters turns AI-generated video from fragmented moving images into coherent story segments that can be edited and assembled, making "longer stories" far more visually credible.