In early 2026, Meta officially released Spatial Lingo, an open-source mixed reality language learning app for Quest headsets that turns the user's real living space into an interactive practice environment. By combining spatial AI recognition with natural language processing, it lets users hold immersive conversations with a virtual AI assistant anywhere in their home.

The application is currently available only in the United States, and its complete source code has been released to the developer community. Meta's move both demonstrates the potential of mixed reality in education and gives developers a technical reference template for building new language learning products.

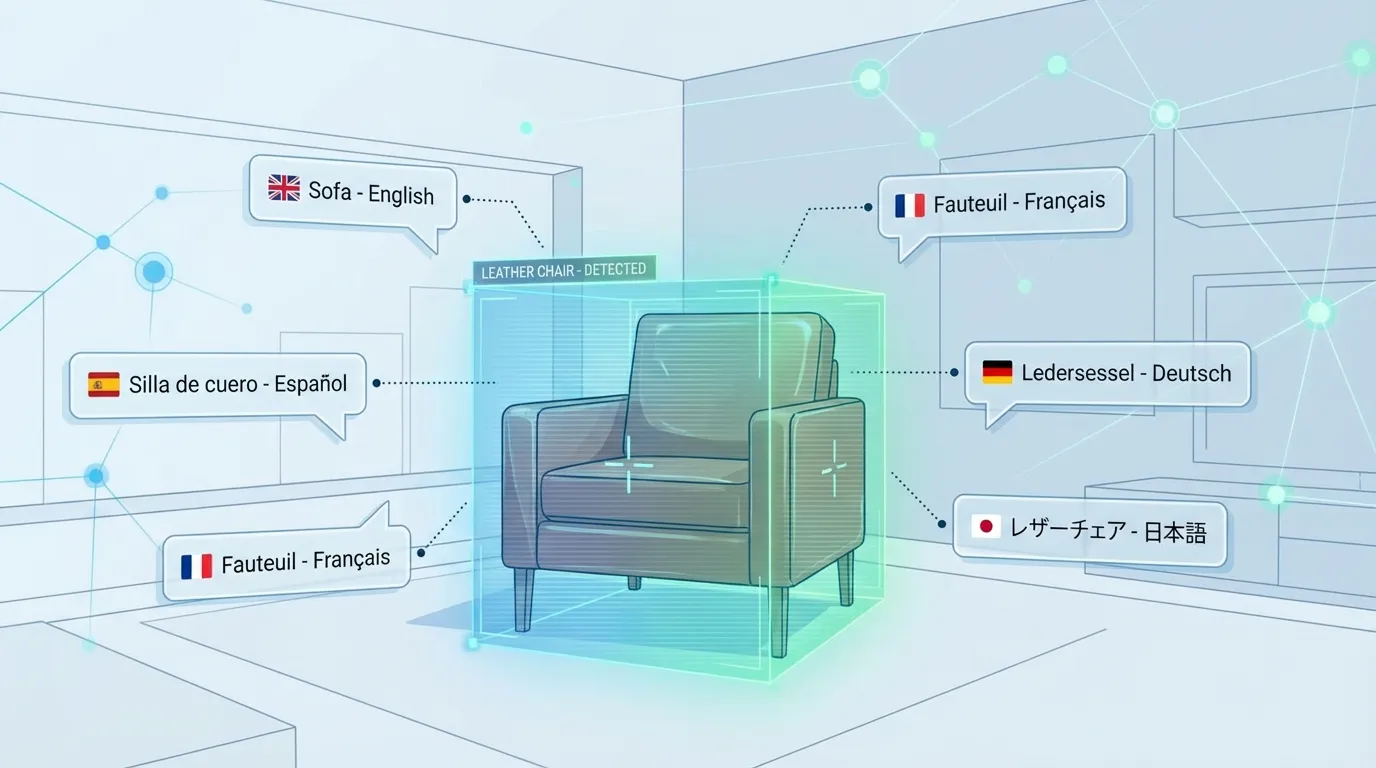

The core highlight of Spatial Lingo is its use of spatial object recognition. When a user puts on a Meta Quest headset, the application scans the surroundings in real time and uses computer vision to recognize real objects such as sofas, tables, chairs, and lamps. Once an object is recognized, the system triggers the AI dialogue engine to generate contextual practice content about it.

For example, when the system detects that the user is looking at a leather chair, the AI assistant starts a conversation: "This chair looks very comfortable. Can you describe its material and color in the target language?" Compared with the picture cards or preset virtual scenes of traditional apps, this approach grounds practice in real surroundings and ties language practice directly to daily life.
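The recognition-to-dialogue flow described above can be illustrated with a minimal Python sketch. This is not Spatial Lingo's actual code: the `DetectedObject` type, the `pick_focus` and `build_practice_prompt` helpers, and the 0.6 confidence threshold are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class DetectedObject:
    """Hypothetical record for one recognized object (not a real SDK type)."""
    label: str         # e.g. "chair", as a recognizer might report it
    confidence: float  # 0.0 to 1.0


def pick_focus(detections: Iterable[DetectedObject],
               min_confidence: float = 0.6) -> Optional[DetectedObject]:
    """Return the most confident detection at or above the threshold, or None."""
    candidates = [d for d in detections if d.confidence >= min_confidence]
    return max(candidates, key=lambda d: d.confidence, default=None)


def build_practice_prompt(obj: DetectedObject, target_language: str) -> str:
    """Build the instruction text that would be handed to a dialogue model."""
    return (
        f"The learner is looking at a {obj.label}. "
        f"Ask them, in {target_language}, to describe its material and color."
    )


# Assumed sample input: the low-confidence lamp is ignored, the chair wins.
detections = [DetectedObject("lamp", 0.42), DetectedObject("chair", 0.91)]
focus = pick_focus(detections)
prompt = build_practice_prompt(focus, "Spanish") if focus else None
```

Filtering by confidence before prompting is one plausible way to keep the assistant from starting a conversation about an object it has misidentified.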

According to Meta's official introduction, the application uses Spatial Anchors to fix virtual dialog boxes in physical space, so the interface stays in place as the user moves. This detail makes the mixed reality experience noticeably more coherent and avoids the break in immersion caused by drifting virtual elements.
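The idea behind anchoring can be shown with a simplified sketch. It is an assumption-laden illustration, not the Spatial Anchors API: the `to_head_space` helper is hypothetical, and head rotation is ignored so that only translation is modeled. The panel's world position is stored once and never changes. Only its position relative to the headset is recomputed as the user moves.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def to_head_space(world_point: Vec3, head_position: Vec3) -> Vec3:
    """Express a world-space point relative to the headset (translation only)."""
    return tuple(w - h for w, h in zip(world_point, head_position))


# The dialog panel is anchored once, in world coordinates (meters, assumed).
panel_anchor: Vec3 = (1.0, 1.5, -2.0)

# The user walks half a meter to the right and one meter forward.
head_before: Vec3 = (0.0, 1.5, 0.0)
head_after: Vec3 = (0.5, 1.5, -1.0)

relative_before = to_head_space(panel_anchor, head_before)
relative_after = to_head_space(panel_anchor, head_after)
```

A head-locked panel would instead keep `relative_before` constant and let its world position follow the user, which is the drifting behavior that anchoring avoids.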

Unlike traditional language learning apps that rely on one-way input through a flat screen, Spatial Lingo builds a three-dimensional interactive experience. Users can move freely between rooms such as the living room, bedroom, and kitchen, and the AI assistant switches topics to match: food vocabulary in the kitchen, reading topics in the study, and everyday expressions in the bedroom.

This design draws on the idea of "episodic memory" in language learning: when learning content is associated with a specific physical place, the brain is more likely to retain it long term. Each time the user practices the target language in the same setting, the association is reinforced, until seeing the sofa is enough to bring the relevant expressions to mind.
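The room-based topic switching described above could be sketched as a simple lookup. The room names and topics come from the examples in the text, while the `ROOM_TOPICS` table, the `topic_for_room` helper, and the fallback topic are hypothetical and do not reflect how Spatial Lingo is actually implemented.

```python
# Room-to-topic table taken from the examples in the article.
ROOM_TOPICS = {
    "kitchen": "food vocabulary",
    "study": "reading topics",
    "bedroom": "everyday expressions",
}

# Assumed fallback for rooms the table does not cover.
DEFAULT_TOPIC = "objects in view"


def topic_for_room(room: str) -> str:
    """Return the practice topic for a room label, ignoring case and padding."""
    return ROOM_TOPICS.get(room.strip().lower(), DEFAULT_TOPIC)


kitchen_topic = topic_for_room("Kitchen")
garage_topic = topic_for_room("garage")
```

Keeping the mapping in data rather than in branching logic would make it easy to add rooms or to tailor topics per learner.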

According to Meta's technical team, the application's built-in speech recognition system gives real-time feedback in multiple languages, correcting pronunciation errors and offering grammar suggestions. The AI dialogue engine is driven by a large language model that tracks context and generates natural multi-turn conversations rather than mechanical question-and-answer exchanges.

As an open-source showcase app, Spatial Lingo's technical architecture is fully open to the developer community. Meta's official documentation explains in detail how core modules such as Spatial Mapping, Object Recognition, and Voice Interaction are implemented.

This strategy reflects Meta's intention to cultivate an ecosystem of mixed reality education applications. With a reusable technical framework, third-party developers can quickly build similar applications and extend the approach to fields such as mathematics tutoring, history teaching, and vocational training. The source code is hosted on GitHub, where it has attracted hundreds of developers who contribute optimizations and feature extensions.

Industry analysts note that the move is both a demonstration of Meta's technical strength and a strategic response to competitors such as Apple Vision Pro. As competition among spatial computing devices intensifies in 2026, building a developer ecosystem has become a core competitive advantage for platform vendors.

Spatial Lingo is not the first VR language learning product on the market. The Quest app store already has several mature applications, such as IMMERSE, Language Lab, and Lingo Quest, and some products offer a hybrid mode that combines live courses taught by real teachers with AI tutoring.

Even so, an open-source application backed by Meta has its own value. Developers generally see the technical reference that Spatial Lingo provides as more significant than the product's commercial competitiveness, because it sets out technical standards and design paradigms for mixed reality education applications.

According to research in VR education, user engagement with immersive language learning applications can reach 3 to 5 times that of traditional applications, and course completion rates are also significantly higher. As new-generation MR devices such as Quest 3 become more widespread, this niche market is entering a period of rapid growth.

Despite its innovative potential, Spatial Lingo currently faces several limitations. The application needs good lighting, and object recognition accuracy drops in dim environments. The AI dialogue content lacks depth and specialization, which makes it hard to meet the needs of advanced learners. Availability only in the United States also limits access for users elsewhere.

The Meta technical team said that future versions will optimize the algorithms to improve recognition in low-light environments and will introduce more powerful domain-specific language models to support specialized scenarios such as business English and academic writing. The team is also evaluating whether to open the application to more countries and regions.

From a longer-term perspective, Spatial Lingo is a microcosm of how AI and mixed reality are converging. As spatial computing devices become everyday tools, the boundary between the physical world and digital information will blur further, and education, work, and entertainment will all be redefined. Through its open-source strategy, Meta is accelerating this process and inviting more developers to explore what the era of spatial intelligence makes possible.